I had the great opportunity to co-present with Ståle Hansen (Microsoft Teams Voice Expert) on a recent webinar (April 2022). The topic was the off-the-shelf software tools for researching and troubleshooting call quality issues in Microsoft Teams. We also discussed the available options for improving telemetry data gathering and shortening the time needed to analyze Teams call quality problems and find the root cause. Even though I was familiar with most of these tools, like Microsoft CQD, I had never really thought about the complexity facing a helpdesk engineer who must coordinate several IT support groups to run all these tools, gather the necessary data, and analyze the problem to find a fix. As we discussed during the webinar, that takes quite a while.

In this post I will highlight the topics we discussed on the webinar and spotlight some important tips I received from Ståle for improving call quality in Microsoft Teams. If you’d like to watch the recording of the webinar, please use this link to the full overview page or take a look at the end of the post.

Monitoring End-to-End Performance for Teams Call Quality

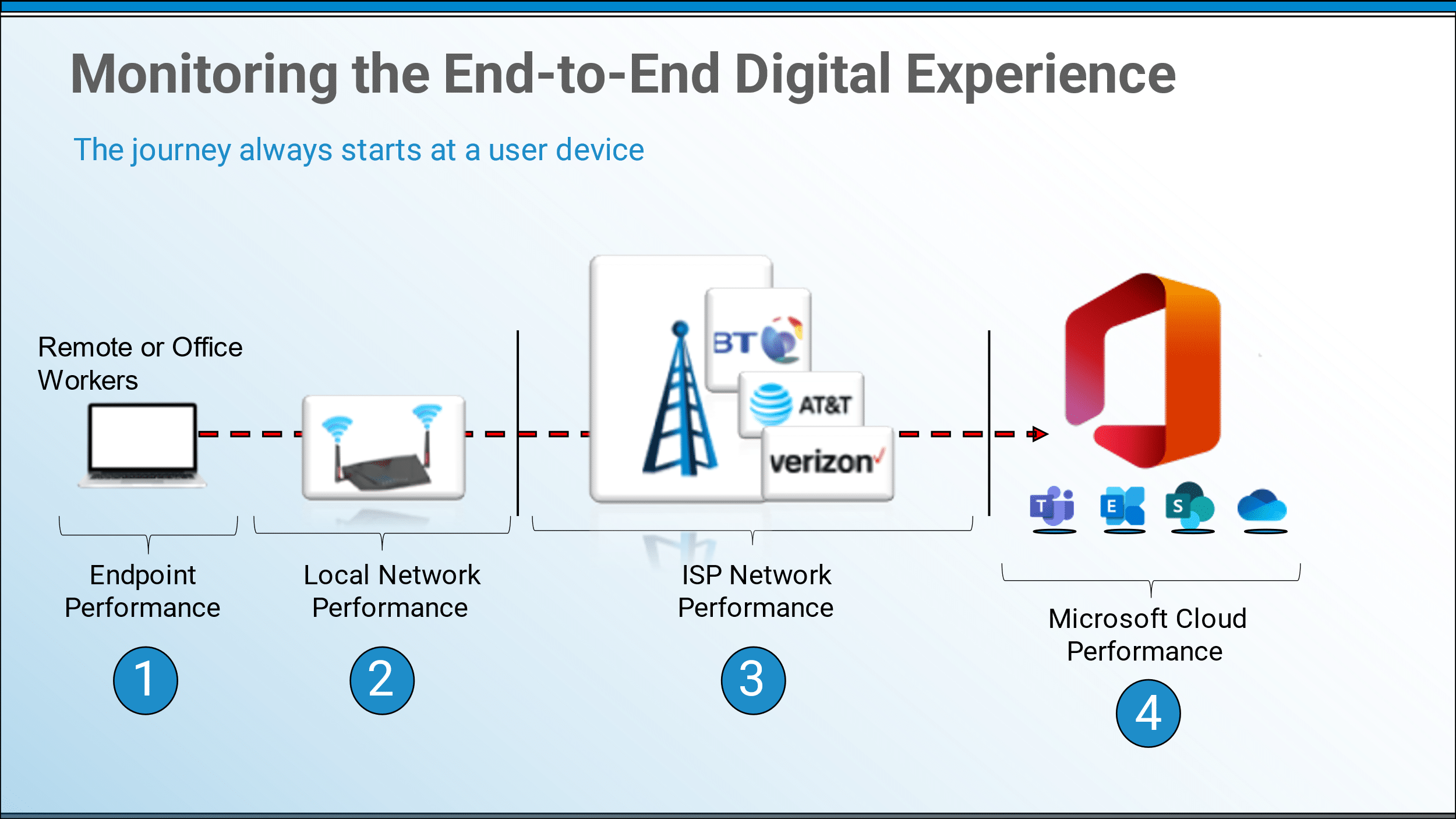

One of the most important slides we showed attendees during the webinar covered the four segments of the end-to-end digital experience journey for Teams voice traffic. It sets the stage for the different tools needed to assess performance at each stage of that journey. Note that this map shows the path for both office workers and employees working remotely, sometimes from a home office.

Four Segments of the Teams Voice Traffic Journey

The big difference between remote workers and office-based workers is the network performance monitoring shown in segments 2 and 3 above. Employees working remotely reach the Microsoft cloud from unmanaged, unmonitored networks, which are a blind spot for IT support groups. The network performance tools we discussed during the webinar apply only to office locations on corporate networks.

Four Microsoft Tools Where Network Subnets Matter

Microsoft provides some great tools to help plan and monitor Teams enterprise voice deployments. Getting the most out of them requires uploading information on the regions, sites, and subnets that make up your corporate network. This gives a more complete picture of network performance. Use this link for more information on how to upload site information.

This data gives the Microsoft monitoring and reporting tools more detail to work with, including the Call Quality Dashboard (CQD), Productivity Score, Teams Network Planner, and Call Analytics.
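To illustrate why the subnet data matters, here is a minimal sketch of how a client IP address could be mapped to a region and site once the subnets are known. This is not how the Microsoft tools are implemented; the `SITES` table, the site names, and the `lookup_site` helper are all hypothetical and only show the idea using Python's standard `ipaddress` module.

```python
import ipaddress

# Hypothetical site table: subnet -> (region, site). A real deployment
# would list its own corporate subnets here.
SITES = {
    "10.1.0.0/16": ("EMEA", "Vienna HQ"),
    "10.2.8.0/24": ("AMER", "Boston Office"),
}

def lookup_site(ip, sites=SITES):
    """Return (region, site) for the most specific matching subnet, or None."""
    addr = ipaddress.ip_address(ip)
    best = None
    for cidr, site in sites.items():
        net = ipaddress.ip_network(cidr)
        # Prefer the longest prefix when several subnets contain the address.
        if addr in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, site)
    return best[1] if best else None

print(lookup_site("10.2.8.17"))    # an address inside a known office subnet
print(lookup_site("192.168.1.5"))  # an unknown network, e.g. a home office
```

An address that matches no uploaded subnet comes back as `None`, which mirrors the blind spot described above for remote workers on unmanaged networks.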

Understanding Microsoft CQD Measurements and Call Quality Ratings

During the webinar we also covered some useful information on the Microsoft CQD tool and explained how it classifies calls as “Poor Quality”. CQD is one of the most common Microsoft tools that corporations rely on for monitoring Teams VoIP call quality. It provides valuable details on usage across the tenant so you can track the number of Peer-to-Peer Calls, Group Calls / Meetings, and PSTN Traffic. CQD also measures call quality based on five key metrics, each with a quantified threshold for grading the impact on users. If any of these limits is breached during a call, the call is flagged as “Poor Quality” and requires additional research and inspection using other tools.

- Network Packet Loss Rate – should not be greater than 10%.

- Round Trip Time (RTT) – reflects the number of milliseconds that it takes a network voice packet to make a round trip between two people who are speaking with one another. Should not be greater than 500ms.

- Amount of Audio Traffic Jitter – jitter is a variation in the amount of time that packets take to reach their destination. Excessive jitter negatively impacts a stream’s audio quality. If a stream’s average jitter is found to be greater than 30 milliseconds, then the call is considered to be of poor quality.

- Audio Degradation Average – based on the amount of network jitter and packet loss, as it relates to an audio stream. If the average audio degradation is found to be above 1.0, then the stream is classified as being of poor quality.

- Ratio Concealed Average – the ratio of audio frames containing concealed samples (generated by a packet loss healing mechanism) to the total number of audio frames. If this ratio is above 0.07 (7%), it is interpreted as poor audio quality.

If just one of the above metrics exceeds the documented threshold, the specific Teams call stream is classified as poor. However, finding the root cause of the audio jitter, the long RTT, or the packet loss requires more investigation using tools that measure network throughput on different segments or processing speeds on the endpoint devices. That’s where the hard work and the extended investigation come into play.
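The classification rule above can be sketched in a few lines. This is only an illustration of the "any one breach means poor" logic using the thresholds listed in this post; the metric key names and the `breached_metrics` and `classify_stream` helpers are made up for the example, and treating a missing metric as "not breached" is a simplifying assumption.

```python
# Thresholds as listed above. The key names are hypothetical and do not
# correspond to actual CQD field names.
THRESHOLDS = {
    "packet_loss_rate": 0.10,      # 10%
    "round_trip_ms": 500,          # milliseconds
    "jitter_ms": 30,               # milliseconds
    "audio_degradation_avg": 1.0,
    "ratio_concealed_avg": 0.07,   # 7%
}

def breached_metrics(stream):
    """Return the names of all metrics that exceed their threshold."""
    return [
        name
        for name, limit in THRESHOLDS.items()
        # Assumption: a metric missing from the stream is not a breach.
        if stream.get(name) is not None and stream[name] > limit
    ]

def classify_stream(stream):
    """A single breached metric is enough to classify the stream as poor."""
    return "poor" if breached_metrics(stream) else "good"

stream = {"packet_loss_rate": 0.02, "round_trip_ms": 620, "jitter_ms": 12}
print(classify_stream(stream), breached_metrics(stream))
```

Note that the result only says which metric crossed the line. It says nothing about which segment of the journey caused it, which is exactly the gap the rest of this post is about.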

IT Support Groups Involved in Call Quality Troubleshooting

The biggest ‘Aha’ moment during our discussion with Ståle was about the multiple IT support groups involved in investigating, analyzing, and troubleshooting reported VoIP call quality issues. A case study that panagenda referenced showed that one of their enterprise customers was spending an average of 14 hours, spread over 4 days, to research and remediate a helpdesk trouble ticket related to Teams call quality. This sounded shocking to Ståle when we first discussed the numbers, but after hearing the entire story from the customer it made complete sense. The assessment of each ticket was managed by a lead support engineer, who needed to bring in assistance from three different IT support groups:

- Desktop Support

- Network Engineering

- Microsoft 365 / Teams Voice Support

The challenge was related to the end-to-end digital experience journey that I described above. Each of these IT support departments had to run its own data gathering tools to measure performance on its segment of the Teams enterprise voice traffic path and see whether it could spotlight the root cause, the chokepoint, that impacted call quality. Was it the end user’s computer (the endpoint), their local network bandwidth, the ISP / Internet speed, or the Microsoft cloud service?

Gathering all that information and pulling the data together so these three IT support groups could collaborate on their findings took time. On average it took this customer 14 hours, and sometimes they never found the problem because the log data was already gone. That’s when I knew that this was an interesting topic for future discussions with other Teams voice experts. How are IT operations groups troubleshooting Teams call quality issues today? How long does it take them to find the root cause? And how many IT engineers does it take to do the analysis?

During the webinar we did a 10-minute demonstration of our TrueDEM EPM solution. It aggregates all the telemetry data needed, including Microsoft CQD metrics, and makes it available in a single UI for fast troubleshooting. We showed how a single IT support engineer can research an issue and find the problem in minutes, instead of days.

An Important Best Practice for Improved Call Quality

During the webinar we came to one important conclusion, one best practice, that can help all IT operations groups that rely on Teams as their enterprise voice platform. Get your Teams voice traffic into the Microsoft media relays as quickly as possible! The Microsoft global network is SUPER fast, on the order of 160 terabits per second, with more than 185 global network POPs and over 165,000 miles of lit fiber optic and undersea cable systems.

To get this fast onramp to the Microsoft cloud network, you should always try to route Teams traffic directly, with no detours or interception along the way. The biggest sticking points are network configurations for VPN routing and traffic security inspection. Teams voice traffic should be exempted from that slower routing by using the following options:

- Set up VPN Split Tunnel Configurations for all Teams traffic

- Whitelist Teams Network Traffic for Passthrough (bypass network traffic inspections and proxy settings)
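As a rough illustration of the split tunnel idea, the sketch below decides whether a destination address should bypass the VPN. The `BYPASS_RANGES` values are example ranges assumed here for Teams media traffic; they are not taken from the webinar and must be checked against Microsoft's currently published Microsoft 365 endpoint list before use. The `should_bypass_vpn` helper is hypothetical, and in practice this decision is made in the VPN client or firewall configuration, not in a script.

```python
import ipaddress

# ASSUMPTION: illustrative ranges only. Verify against the current
# published Microsoft 365 endpoint list before relying on them.
BYPASS_RANGES = [
    ipaddress.ip_network("13.107.64.0/18"),
    ipaddress.ip_network("52.112.0.0/14"),
    ipaddress.ip_network("52.122.0.0/15"),
]

def should_bypass_vpn(destination_ip, ranges=BYPASS_RANGES):
    """True if the destination falls inside a range exempted from the tunnel."""
    addr = ipaddress.ip_address(destination_ip)
    return any(addr in net for net in ranges)

for ip in ("52.113.10.20", "8.8.8.8"):
    route = "direct (split tunnel)" if should_bypass_vpn(ip) else "via VPN"
    print(ip, "->", route)
```

The same range list can drive both options above: the split tunnel exclusion and the passthrough rule on the inspection proxy.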

Microsoft Cloud Accelerator Workshops – Modernize Communications, or Hybrid Meetings

I would like to thank Ståle Hansen for joining me for this technical discussion on Teams voice troubleshooting. There is one other thing from our webinar discussion that I would like to pass along. If you would like to understand more about optimizing your Teams voice deployment, there is a free workshop series provided by Microsoft. The consulting services group at CloudWay is one of the partners authorized by Microsoft to deliver these 1-day workshops. If you would like to hear how Teams voice services can modernize your unified communications and collaborative meetings, please contact the experts at CloudWay to check on eligibility. Once again, there is no charge for these workshops. They can be held either on-premises at your location or virtually.

Don’t miss the next webinar with Microsoft RD & Teams MVP Ståle Hansen on June 15, 2022. In this technical discussion with Microsoft Teams UC experts on the performance redlines that cannot be crossed, we will review actual metrics from our current customers, covering over 1 million endpoints.